5 Reasons Cutting Corners in Coding Can Risk Everything

Is it me, or does it seem like there are more online scams this year? Remember the 16-billion-credential “mother-of-all leaks”? In June 2025, researchers found a compilation dump containing 16 billion fresh usernames, passwords and session cookies, the largest credential leak ever recorded. Or how about the ever-present toll texts on your phone?

Okay, maybe it’s not me. Turns out, AI-enabled crimes are up 456% since last year. Former federal prosecutor Gary Redbord says, “AI has removed the human bottleneck from scams, from cybercrime, and other types of illicit activity.” As a former hacker, I am always on the lookout for scammers. They are on the bleeding edge of tech, so as technology progresses with AI, so does the crime game of malicious actors. Here are 5 cybersecurity threats to be aware of, and ways you can work around them:

1. API Keys in Code

API keys in code are passwords for your app that control access to sensitive data.

When attackers get hold of your API keys, they have access to your sensitive data and can do any number of malicious things, like cause data breaches, disrupt your services, and steal your money.

How to respond:

Unfortunately, once API keys are written into your code, it’s hard to prevent people getting hold of them. Criminals gotta eat, too. So keep keys out of source files where you can, and learn to respond quickly to an attack: revoke and rotate any key you suspect was exposed, and set up firewalls to block malicious traffic. You can also do what we do to prevent attacks: use your own private chatbot. That eliminates a huge vulnerability in your system, because you are no longer exposed to cybercriminals online. Click here to find out how you can set up your private chatbot with DeepSeek Unchained.
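One common way to keep a key out of your code is to read it from an environment variable at startup. Here is a minimal sketch of that idea; the variable name `MY_SERVICE_API_KEY` and the helper `load_api_key` are made up for illustration and are not part of any product mentioned here.

```python
import os


def load_api_key(var_name: str = "MY_SERVICE_API_KEY") -> str:
    """Read an API key from the environment instead of hard-coding it.

    MY_SERVICE_API_KEY is a hypothetical name; use whatever your service expects.
    """
    key = os.environ.get(var_name, "").strip()
    if not key:
        # Fail loudly at startup rather than running with a missing key.
        raise RuntimeError(f"Set the {var_name} environment variable before starting the app.")
    return key
```

With this approach the key never lands in your repository, so a leaked or public repo does not automatically mean a leaked key.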

2. Slopsquatting

Slopsquatting comes from AI code assistants hallucinating or suggesting software packages that don’t exist. Hackers watch for these made-up packages, quickly register those names, and then upload malware under the guise of the made-up products. If a developer blindly trusts the AI’s recommendations and incorporates these packages, they unknowingly inject malicious code into the code base.

How to respond:

There are several responses to slopsquatting:

- Double-check every add-on

If the AI names a new software “package,” look it up on the official sites (PyPI, npm, etc.) to be sure it’s real.

- Let security scanners do the work

Turn on tools like Dependabot or Snyk so they automatically flag missing or shady packages.

- Freeze the versions

Lock package versions so a surprise update can’t sneak in a bad actor.

- Make the AI double-check itself

Ask the assistant to list every package it used and prove each one exists.

- Tame the AI when needed

Turn down its “creativity” setting or retrain it if it keeps hallucinating.
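To make the “freeze the versions” tip concrete, here is a rough sketch that scans the text of a pip-style `requirements.txt` and lists any package that is not pinned to an exact version. The function name `find_unpinned` is hypothetical, and the check is deliberately simple: it assumes plain `name==version` lines and will also flag lines that use environment markers.

```python
import re

# Matches "name==version", optionally with extras such as name[extra]==1.2.3.
PINNED = re.compile(r"^[A-Za-z0-9._-]+(\[[^\]]+\])?==[^=\s]+$")


def find_unpinned(requirements_text: str) -> list[str]:
    """Return requirement lines that are not pinned with '=='."""
    unpinned = []
    for raw in requirements_text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments
        if not line or line.startswith("-"):  # skip blanks and pip options like -r
            continue
        if not PINNED.match(line):
            unpinned.append(line)
    return unpinned
```

Anything this returns is a package whose next release could change under you, so it is worth pinning or at least reviewing by hand.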

3. Code Removal

Code removal is when the AI generates your code with chunks of it missing. This can happen if it 1. hallucinates an instruction to delete some lines of code from the codebase, or,

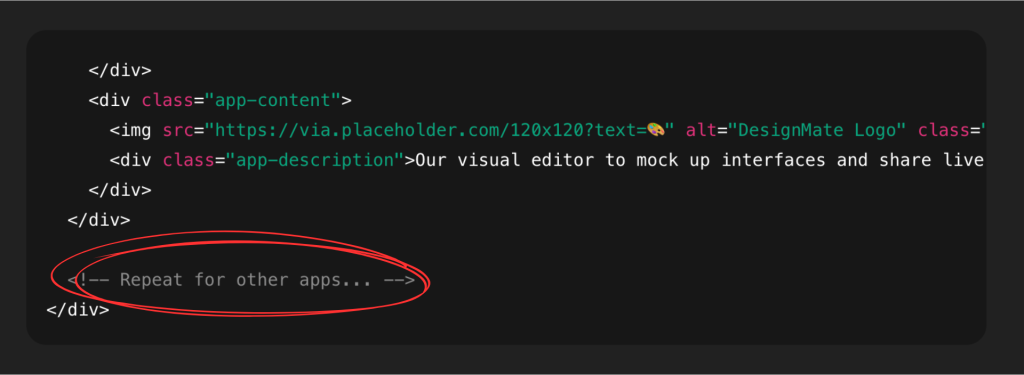

2. instead of writing all the code it should, inserts a comment like

the code from here remains the same as before

which is where the AI gets lazy and doesn’t write everything it was supposed to.

For example,

This is really easy to miss if you’re working on code that goes on for several pages.

Code removal leads to two cybersecurity problems: destruction of data and vulnerability exploitation. Missing code can wipe out data, and if the removed lines handled validation or access checks, the gap can act as a backdoor that gives attackers access to sensitive data.

How to respond:

Here are 3 things to keep in mind when coding with AI to minimize this:

1. Always keep backups of your code.

Use version control (Git). Make commits often. Push to GitHub or another cloud repo. Before asking AI to rewrite a file, save a copy. Even a quick cp app.js app_backup.js helps.

2. Store a read-only backup somewhere else.

Don’t rely only on your laptop. Sync to Dropbox, Google Drive, or a private repo.

3. Add guardrails to your workflow.

Use AI in a branch, not directly in main. Review and test before merging.
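As one possible guardrail, you could scan AI-generated files for the tell-tale “rest of the code is unchanged” comments before you merge. The sketch below is only an illustration: the phrase list and the name `find_elisions` are my own assumptions, and you would want to extend the patterns for the tools you actually use.

```python
import re

# Phrases that often mark skipped code. Hypothetical list; extend it as needed.
ELISION_PATTERNS = [
    r"remains? the same",
    r"rest of (the )?(code|file|function)",
    r"existing code",
    r"unchanged",
]
_ELISION = re.compile("|".join(ELISION_PATTERNS), re.IGNORECASE)
# Only look at lines that start like a comment in common languages.
_COMMENT = re.compile(r"^\s*(#|//|/\*|\*|<!--)")


def find_elisions(source: str) -> list[tuple[int, str]]:
    """Return (line number, text) for comment lines that look like placeholders."""
    hits = []
    for number, line in enumerate(source.splitlines(), start=1):
        if _COMMENT.match(line) and _ELISION.search(line):
            hits.append((number, line.strip()))
    return hits
```

A check like this will not catch silently deleted lines, so it complements a normal diff review rather than replacing it.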

4. Credential Harvesting

Attackers get usernames and passwords through any means necessary, including phishing, social engineering, or exploiting vulnerabilities in apps that store or process credentials. This is similar to the risk you face with API keys in code, but credential harvesting targets the logins people use, such as email addresses and passwords, rather than API keys.

Once inside a network with valid credentials, attackers can do many things, including:

- Plant ransomware.

- Intercept communications.

- Conduct long-term surveillance.

How to respond:

When this happened to me, I took these steps:

I barricaded the front door with phishing-resistant MFA, watched the windows with disciplined logging and alerting, and rehearsed a plan to slam every entry shut the moment a thief is spotted.

I had to do the following as well:

- Use phishing-resistant MFA, such as a hardware security key or passkey. Fall back to an authenticator app at worst; never rely on SMS.

- Let a password manager create and store unique passwords; don’t reuse them.

- Keep every device and browser patched and run reputable anti-malware plus built-in OS protections.

- Stay alert to phishing: inspect links, sender address, spelling, and urgency cues before a single click.

- Monitor your identity: freeze your credit, watch accounts for new-device logins, and change any password you even suspect was exposed.
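If you run your own app, the “watch for new-device logins” idea can be sketched in a few lines. This is a toy example under my own assumptions: the `LoginEvent` type, the `device_id` field, and the in-memory dictionary are all hypothetical stand-ins for whatever your logging system really records.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LoginEvent:
    user: str
    device_id: str  # hypothetical identifier, e.g. a device fingerprint


def new_device_logins(events, known_devices):
    """Return logins from devices not seen before for that user.

    known_devices maps user -> set of device ids and is updated in place,
    so each new device is reported only once.
    """
    alerts = []
    for event in events:
        seen = known_devices.setdefault(event.user, set())
        if event.device_id not in seen:
            alerts.append(event)
            seen.add(event.device_id)
    return alerts
```

Note that a brand-new user's first login is also flagged here, which is usually what you want: someone should confirm it was really them.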

5. Extra Code Based on Hallucinated Requirements

If you’re vibe coding an app, an LLM might hallucinate a requirement that didn’t previously exist, like “the software must have a login page”, and then proceed to create and integrate a login page you didn’t ask for. Hallucinations can stem from a lack of sufficient training data, the model’s tendency to overgeneralize, or its inability to differentiate between real and imagined information.

How to respond:

Some of the most effective responses to this cybersecurity threat are:

- Improving data quality and relevance

- Employing retrieval-augmented generation (RAG)

- Reinforcement Learning from Human Feedback (RLHF)

- Prompt engineering techniques (clear instructions, examples, chain of thought, restricting external information)

- Fine-tuning or training your own models
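Of the options above, prompt engineering is the cheapest to try. Here is a small sketch of one way to spell out the scope so the model has less room to invent requirements; the function `build_scoped_prompt` and the exact wording are my own assumptions, not a guaranteed fix for hallucinations.

```python
def build_scoped_prompt(task: str, requirements: list[str]) -> str:
    """Build a prompt that lists the requirements and forbids extras."""
    numbered = "\n".join(f"{i}. {req}" for i, req in enumerate(requirements, start=1))
    return (
        f"Task: {task}\n\n"
        "Implement ONLY the requirements listed below.\n"
        f"{numbered}\n\n"
        "Do not add features, pages, or dependencies that are not listed. "
        "If something seems to be missing, ask a question instead of assuming."
    )
```

For example, `build_scoped_prompt("Build a to-do app", ["Add a task", "Delete a task"])` produces a prompt that names exactly two features, which makes an unrequested extra page easier to spot in review.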

We have solved RAG with our product Dabarqus in our private chatbot, DeepSeek Unchained. Download it here.

Conclusion

AI is a tool, not an evil device. Criminals have used it for harm, but you can work around them. Know what tactics attackers use to stay a step ahead of them, and thwart their malicious ways. RAG and fine-tuning are ways to employ AI for good. The faster business owners stop cybercrime in its tracks, the sooner attackers go looking for a new hustle.